In a bold leap into the future of content creation—and perhaps content chaos—Google has unleashed Veo 3, its most advanced video generation AI to date. The technology is capable of producing hyper-realistic video clips from nothing more than a few lines of text, and in just weeks, it has already transformed the face of online media. Or more accurately, it’s distorted it, reshaped it, and—according to many—deepfaked it beyond recognition.

Veo 3 represents a technical marvel. It can interpret prompts to create photorealistic scenes, animate faces with uncanny accuracy, and generate dynamic camera movements, ambient sound, and dialogue. With its cinematic-level polish and ease of use, the tool allows virtually anyone with a laptop and a little imagination (or none at all) to become a filmmaker overnight.

Predictably, the internet has responded the way it often does: with an overwhelming flood of absurdity. YouTube has been inundated with AI-generated content that lands somewhere between comedy, chaos, and complete nonsense. Critics have dubbed many of these videos “smooth-brained content,” a tongue-in-cheek term for overly simplified, low-effort videos optimized for algorithmic success rather than artistic merit.

A new generation of content creators is using Veo 3 to generate bizarre mashups, surreal reaction skits, fake interviews, and synthetic drama series. One popular video features a disturbingly realistic digital version of Elon Musk attempting stand-up comedy in a post-apocalyptic mall. Another revisits the infamous “Will Smith eats spaghetti” AI video, now rendered with eerily lifelike textures and full voiceover, reviving a meme that had mercifully faded from memory.

The tech’s viral success highlights a deeper issue. While Veo 3 was pitched as a creative tool for filmmakers, educators, and storytellers, its current trajectory suggests that the easiest path to virality is paved not with thoughtful narrative, but with novelty, chaos, and sheer volume.

This raises uncomfortable questions about authorship, creativity, and authenticity. With AI now capable of fabricating entire video performances complete with sound and dialogue, what becomes of real human expression? Who owns a performance generated using the likeness of a celebrity or public figure? And if audiences can no longer distinguish between a human and an AI, does it even matter?

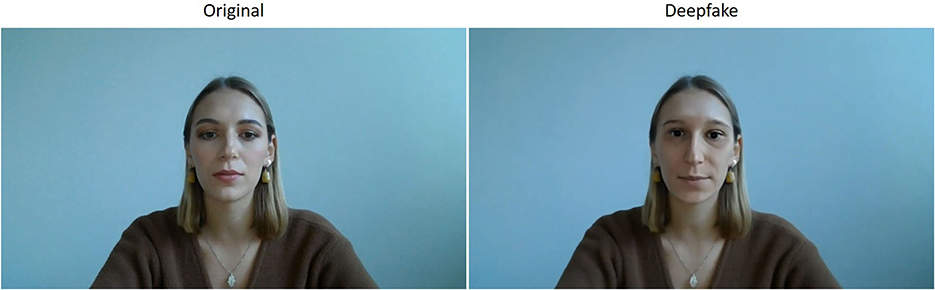

Privacy and ethical concerns have quickly followed. Veo 3’s capacity to imitate real people—especially public figures—has triggered alarms among digital rights activists. Although Google has integrated some protections, including watermarking and filters designed to prevent impersonation, the sheer power of the tool makes it ripe for misuse. Deepfakes, misinformation, and reputational damage are all on the table.

In response, YouTube is racing to adapt. The platform is reportedly developing AI detection tools to identify deepfakes and flag content that misuses someone’s identity. There are also plans to give creators more control over how their likeness is used by others. But the speed at which generative tools like Veo 3 are evolving makes regulation a game of catch-up.

Despite the chaos, some see opportunity. Independent creators and small studios are using Veo 3 to experiment with storytelling, short films, and educational content that would otherwise be impossible on a tight budget. There’s real creative potential here—if it can rise above the noise.

For now, Veo 3’s legacy is being written in real time. Whether it becomes a revolutionary tool for digital storytelling or just another means of flooding the internet with visual junk depends largely on how platforms, creators, and audiences choose to engage with it.

In the meantime, prepare to be deepfaked, whether you realize it or not.