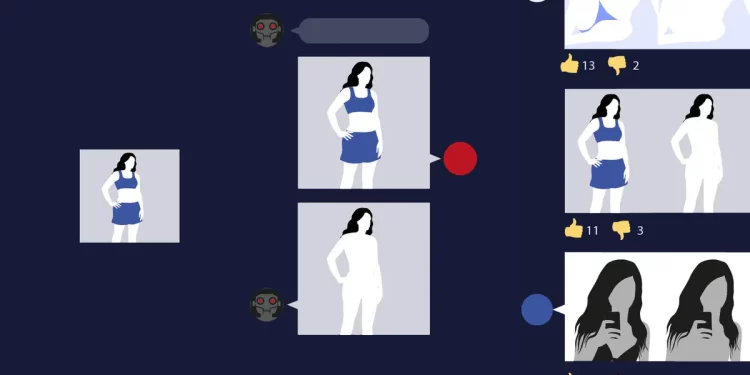

In a troubling development, a recent report has revealed that over 50 AI-powered bots on the messaging platform Telegram, used primarily to create deepfake images and videos of women and girls, have amassed more than 4 million users. This alarming trend raises significant ethical concerns about privacy, consent, and the potential for harassment in the digital landscape.

The report, released by digital rights organization CyberSafe, highlights how these bots leverage advanced artificial intelligence algorithms to generate hyper-realistic content that can be indistinguishable from genuine images. While deepfake technology has legitimate applications in entertainment and art, its misuse for creating non-consensual and often explicit content poses serious threats to the victims involved.

Experts warn that the proliferation of such bots could lead to increased instances of cyberbullying, harassment, and even identity theft. “The ability to create and distribute deepfake content with ease can have devastating consequences for individuals, particularly women and girls who are disproportionately targeted,” said Dr. Emily Torres, a leading researcher in digital ethics.

Telegram, known for its strong encryption and user privacy, has become a hotspot for these activities, as the platform’s structure allows users to remain relatively anonymous. While Telegram has policies against abusive content, the rapid growth of these deepfake bots presents challenges for monitoring and enforcement.

Victims of deepfake incidents often face emotional and psychological distress, as well as reputational damage. Many are unaware that their images are being manipulated and shared without their consent. Legal frameworks regarding deepfakes are still evolving, leaving many victims without recourse.

In response to the report, Telegram issued a statement emphasizing its commitment to combating harmful content on the platform. “We are actively working to enhance our detection systems and collaborate with experts to identify and mitigate the risks associated with deepfakes,” the company stated.

As awareness of the dangers posed by deepfake technology grows, advocates are calling for stronger regulations and better support for victims. “We need comprehensive laws that not only penalize the creation and distribution of non-consensual deepfake content but also provide resources for those affected,” said Sarah Lin, a policy analyst at CyberSafe.

The rise of AI-generated deepfakes highlights the urgent need for conversations about digital ethics, consent, and the responsibility of tech companies in safeguarding their users. As technology continues to advance, ensuring safety and respect in online spaces remains a paramount challenge.